Evaluating the structural transition from tightly coupled full-stack legacy suites to API-first decoupled environments and the resulting impact on deployment velocity and infrastructure complexity.

Key Takeaways (TL;DR)

- Infrastructure Decoupling: Moving from a monolithic to a headless architecture reduces deployment interdependency, allowing frontend teams to iterate without triggering full-stack rebuilds.

- API Latency Risks: Headless environments introduce overhead; without edge-side caching and optimized GraphQL fragments, API latency can increase TTFB by up to 40% compared to native SSR.

- TCO Dynamics: While headless reduces “vendor lock-in,” the cumulative TCO often increases due to the requirement for managing multiple specialized services (CMS, Search, Checkout).

- Scalability: API-first architectures enable independent horizontal scaling of high-load services, essential for enterprises managing over 1,000 concurrent checkout sessions.

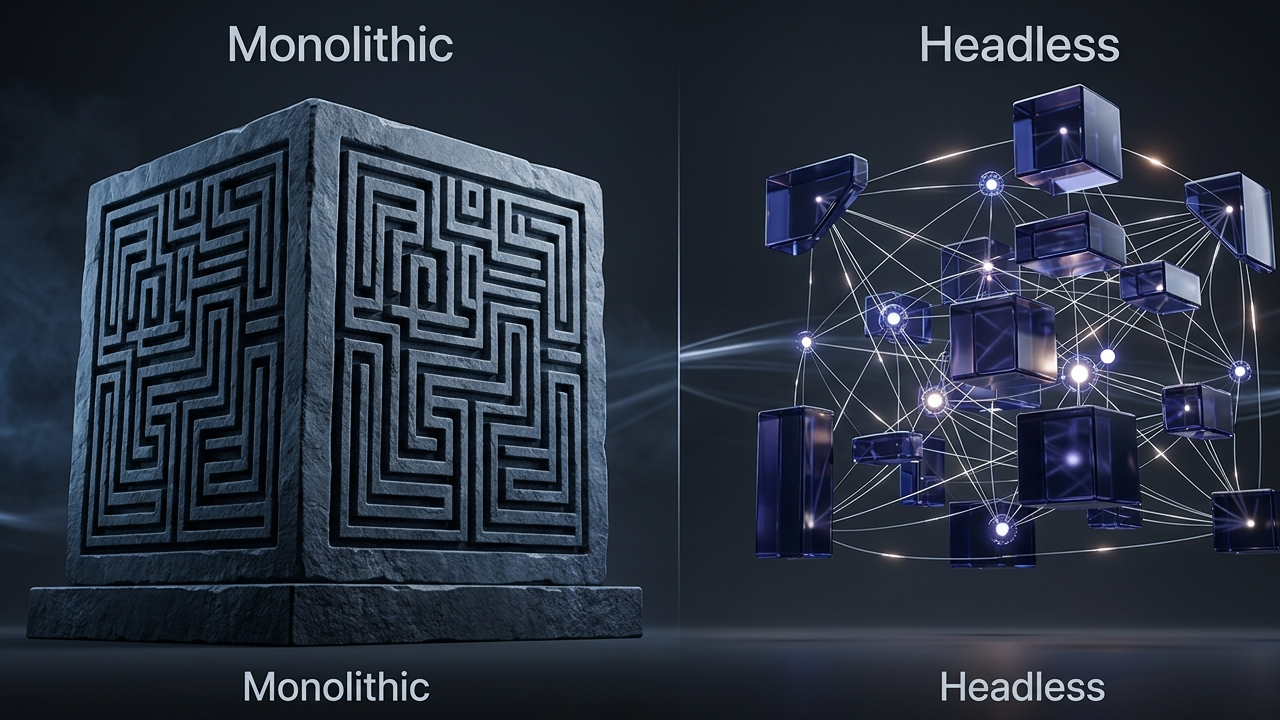

The debate surrounding monolithic vs headless architecture has shifted from a matter of “if” to “how” for enterprise-level organizations. In a traditional monolithic setup, the frontend (presentation layer) and backend (business logic, database, and admin) are unified in a single codebase. While this simplifies state synchronization and initial development, it creates a rigid environment where innovation is throttled by the slowest-moving component. Conversely, a headless storefront decouples these layers, communicating exclusively via APIs, typically within a MACH architecture framework (Microservices, API-first, Cloud-native, and Headless).

Architectural Taxonomy: Comparative Metrics

Choosing between these patterns requires a rigorous enterprise e-commerce TCO analysis. The monolithic approach is often sufficient for mid-market brands with stable requirements, but for global enterprises, the lack of scalability in monolithic systems becomes a critical failure point during peak traffic events.

| Architectural Attribute | Monolithic Suite | Headless (API-First) |
|---|---|---|
| Deployment Frequency | Weekly/Monthly (Single Deployment) | Daily/On-demand (Independent Services) |
| Data Fetching | Direct Database Query / Internal Calls | Asynchronous API Calls (REST/GraphQL) |
| Security Surface | Single point of failure (unified) | Distributed (requires Auth orchestration) |
| Frontend Tech Stack | Proprietary/Legacy (Liquid, JSP, Blade) | Modern Frameworks (Next.js, Remix, Vue) |

Performance and API Latency in Monolithic vs Headless

One of the most significant challenges in the monolithic vs headless transition is managing the network overhead. In a monolith, a page request is processed on the server with direct access to the data layer. In a headless configuration, the browser or a middle-layer server must orchestrate multiple API requests to fetch product data, CMS content, and customer sessions.
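
The cost of that orchestration depends heavily on whether the calls are chained or issued together. The sketch below is a minimal illustration, not a production client: `fakeService`, the service names, and the latencies are hypothetical stand-ins for real catalog, CMS, and session APIs. It contrasts awaiting each call in turn with starting all of them at once via `Promise.all`.

```javascript
// Hypothetical stand-in for a real service call: resolves after `ms` milliseconds.
function fakeService(name, ms) {
  return () => new Promise((resolve) => setTimeout(() => resolve({ service: name }), ms));
}

// Awaits each call before starting the next, so total time is roughly
// the sum of the individual latencies.
async function fetchSequentially(services) {
  const results = [];
  for (const call of services) {
    results.push(await call());
  }
  return results;
}

// Starts every call immediately, so total time is roughly the latency
// of the slowest single call. Result order matches input order.
async function fetchInParallel(services) {
  return Promise.all(services.map((call) => call()));
}

// Small helper that measures how long an async function takes.
async function timed(fn) {
  const start = Date.now();
  const results = await fn();
  return { results, elapsedMs: Date.now() - start };
}

// Illustrative latencies only (assumed values, not measurements).
const services = [
  fakeService('catalog', 40),
  fakeService('cms', 30),
  fakeService('session', 20),
];
```

Running `timed(() => fetchSequentially(services))` and `timed(() => fetchInParallel(services))` side by side should show the parallel version finishing in about the time of the slowest call. Parallel fetching only applies when the requests do not depend on each other's results.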

If any single service in a sequential request chain takes longer than 200ms to respond, the delays accumulate across the chain and the user experience degrades sharply. To mitigate this, architects should adopt MACH architecture implementation patterns that leverage edge computing (e.g., Vercel Edge Functions or Cloudflare Workers) to cache API responses as close to the user as possible.
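
The caching idea can be sketched independently of any edge platform. The example below is a minimal in-memory time-to-live cache, assuming a hypothetical `fetcher` function that retrieves a response for a given key. The names `withTtlCache` and `cachedFetch` are illustrative, and a real edge deployment would use the platform's own cache rather than a `Map`.

```javascript
// Wraps an async fetcher with a time-to-live cache keyed by request key.
// `now` is injectable so expiry can be exercised without real waiting.
function withTtlCache(fetcher, ttlMs, now = () => Date.now()) {
  const entries = new Map(); // key -> { value, expiresAt }

  return async function cachedFetch(key) {
    const hit = entries.get(key);
    if (hit && hit.expiresAt > now()) {
      return hit.value; // fresh entry: no upstream call
    }
    // Missing or expired entry: call upstream and store the result.
    const value = await fetcher(key);
    entries.set(key, { value, expiresAt: now() + ttlMs });
    return value;
  };
}
```

This sketch does not coalesce concurrent misses for the same key, so two simultaneous requests can both reach the upstream service. It also never evicts old keys. Both would need attention before using the pattern with real traffic.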

Code Implementation: Optimized GraphQL Fragment Fetch

To limit round trips in a headless setup, developers should request everything a view needs in a single GraphQL query and use fragments to keep the field selection scoped to each UI component. This avoids over-fetching: only the fields the component actually renders are retrieved, minimizing the payload and reducing processing time:

```javascript
// Fetching product details for a Headless Storefront using Apollo Client
import { gql } from '@apollo/client';

const PRODUCT_FRAGMENT = gql`
  fragment ProductDetails on Product {
    id
    handle
    title
    priceRange {
      minVariantPrice {
        amount
        currencyCode
      }
    }
    images(first: 1) {
      nodes {
        url
        altText
      }
    }
  }
`;

export const GET_PRODUCT_QUERY = gql`
  query getProduct($handle: String!) {
    productByHandle(handle: $handle) {
      ...ProductDetails
      descriptionHtml
      variants(first: 10) {
        nodes {
          id
          title
          availableForSale
        }
      }
    }
  }
  ${PRODUCT_FRAGMENT}
`;
```

State Synchronization and Data Integrity

A secondary technical hurdle in the monolithic vs headless paradigm is maintaining state synchronization. In a monolithic system, the session state is usually managed by the server’s local memory or a shared Redis instance. In a headless environment, especially one utilizing multiple third-party services (e.g., a separate checkout service like Bold or Cart.com), keeping the cart state consistent across the storefront and the checkout engine requires robust webhook handling and event-driven architectures.

Without reliable, ordered event delivery between these services, users can end up with “ghost carts,” where the header shows items that are no longer present in the checkout session. This is particularly prevalent in high-concurrency scenarios where inventory levels are volatile.
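
One way to make webhook handling tolerant of duplicate and out-of-order deliveries is to treat each event as a full cart snapshot carrying a version number, and to keep only the newest snapshot. The sketch below assumes a hypothetical event shape of `{ cartId, version, items }`. It is not the payload format of Bold, Cart.com, or any other provider, and `createCartMirror` is an illustrative name.

```javascript
// Keeps a storefront-side mirror of cart state, fed by snapshot events.
// Assumption: every event carries the complete item map and a per-cart
// version that increases with each change at the source of truth.
function createCartMirror() {
  const carts = new Map(); // cartId -> { version, items }

  // Applies a snapshot only if it is newer than what is already stored.
  // Returns true when the mirror changed, false for stale or duplicate events.
  function applySnapshot(event) {
    const current = carts.get(event.cartId);
    if (current && event.version <= current.version) {
      return false;
    }
    carts.set(event.cartId, { version: event.version, items: { ...event.items } });
    return true;
  }

  // Total quantity across all line items, e.g. for a header cart badge.
  function itemCount(cartId) {
    const cart = carts.get(cartId);
    if (!cart) return 0;
    return Object.values(cart.items).reduce((sum, qty) => sum + qty, 0);
  }

  return { applySnapshot, itemCount };
}
```

Snapshots are used here rather than incremental "item added" or "item removed" events because a dropped or reordered delta would corrupt the mirror, whereas a stale snapshot can simply be discarded.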

The Impact of Scalability on Long-term TCO

While the initial build-out of a headless system is more complex, its scalability offers a defensive advantage. In a monolith, scaling during a high-traffic event (like Black Friday) requires scaling the *entire* application, including underutilized modules like the admin panel or reporting tools. Headless allows you to scale only the storefront and search services, which reduces cloud spend and concentrates the added capacity on the customer-facing services where latency bottlenecks actually occur.
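
The difference can be made concrete with simple arithmetic. The sketch below uses made-up baseline costs and a made-up 5x peak multiplier purely for illustration; `peakCost` and the module names are hypothetical, and the model ignores the extra integration and tooling overhead that headless setups carry.

```javascript
// Computes total cost at peak. If `scaledNames` is omitted, every module is
// scaled (the monolith case); otherwise only the named modules are scaled.
function peakCost(modules, scaleFactor, scaledNames) {
  return modules.reduce((total, m) => {
    const scaled = scaledNames === undefined || scaledNames.includes(m.name);
    return total + m.baselineCost * (scaled ? scaleFactor : 1);
  }, 0);
}

// Illustrative baseline costs in arbitrary units (assumed, not measured).
const modules = [
  { name: 'storefront', baselineCost: 40 },
  { name: 'search', baselineCost: 20 },
  { name: 'admin', baselineCost: 25 },
  { name: 'reporting', baselineCost: 15 },
];

const monolithPeak = peakCost(modules, 5); // 500
const headlessPeak = peakCost(modules, 5, ['storefront', 'search']); // 340
```

Under these assumed numbers the selectively scaled setup costs 340 units at peak against 500 for the monolith. The real gap depends on how much of the baseline the non-customer-facing modules represent.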

Architectural Outlook

In the next 18-24 months, the monolithic vs headless debate will be further complicated by the rise of “Composable Monoliths.” These are platforms that offer the out-of-the-box speed of a monolith with the API-first flexibility of a headless system. We anticipate a shift toward “Frontend-as-a-Service” (FEaaS) providers dominating the mid-market, while enterprise-grade MACH implementations will increasingly rely on AI-driven API orchestration to manage the complexity of multi-vendor state synchronization automatically. The focus will move away from basic connectivity toward optimizing the “interaction layer” between fragmented services.